This article explains the idea of ‘model collapse’. According to Nicolas Papernot, “It’s kind of a reinforcing feedback loop where you only listen to the majority and you start forgetting whatever things were said less often. […] There can be oddities where something that you start generating is actually not that common, and so it just starts reinforcing its own mistakes.”

Model collapse is what happens to AI models (LLMs, but also text-to-image generators) when they are trained on web-scraped data that itself contains AI-generated output. Generated texts and images are already populating the web, so any model trained or updated on data scraped from 2023 onward risks being polluted with such (already impoverished) data and, a few iterations later, may be incapable of generating anything of value at all.
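The feedback loop can be sketched with a toy simulation. This is only an illustration under loud assumptions, not how any real LLM is trained: the "model" is just a table of token frequencies, and the token names, corpus size, and the functions `train_and_generate` and `simulate` are all hypothetical. Each generation is "trained" on the previous generation's output and then produces the next corpus.

```python
import random
from collections import Counter


def train_and_generate(corpus, n_samples, rng):
    """'Train' by counting token frequencies, then sample a new corpus from them."""
    counts = Counter(corpus)
    tokens = sorted(counts)
    weights = [counts[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=n_samples)


def simulate(generations=30, corpus_size=200, seed=0):
    """Return the number of distinct tokens that survive in each generation."""
    rng = random.Random(seed)
    # Hypothetical starting corpus: one majority voice and five rare ones.
    corpus = ["majority"] * 175 + [f"rare_{i}" for i in range(5) for _ in range(5)]
    history = [len(set(corpus))]
    for _ in range(generations):
        # Each new model only ever sees what the previous model produced.
        corpus = train_and_generate(corpus, corpus_size, rng)
        history.append(len(set(corpus)))
    return history


if __name__ == "__main__":
    print(simulate())
```

Because every generation samples only from what the previous one produced, a rare token that fails to be sampled once can never return, so the count of distinct tokens can stay level or fall but never rise.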

It seems that Lotman’s idea of the semiosphere, and of the importance of the periphery in generating (truly) new information and driving cultural development and change, is more timely than ever. Don’t ever think you can ‘delete’ the peripheral/subaltern perspectives: they are absolutely essential for the preservation of the world system (life, culture) as a whole.

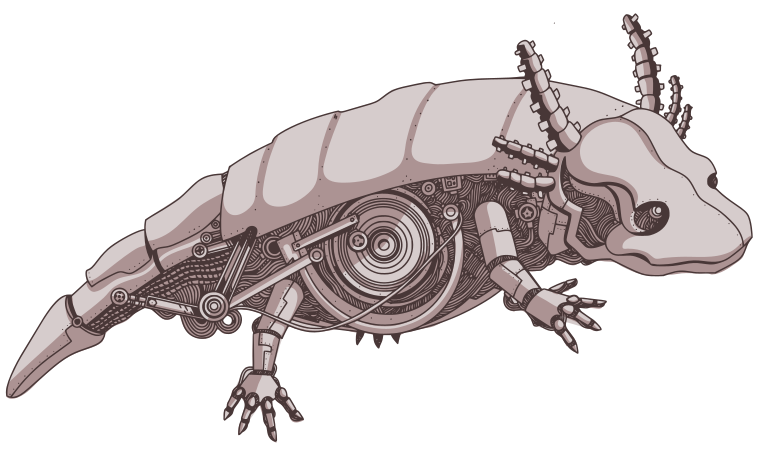

ΤechnoΣημειωτική